"For nearly three decades, historians, journalists, and the public have relied on the Internet Archive to preserve news sites as they appeared online. Those archived pages are often the only reliable record of how stories were originally published. In many cases, articles get edited, changed, or removed-sometimes openly, sometimes not. The Internet Archive often becomes the only source for seeing those changes."

"The Times says the move is driven by concerns about AI companies scraping news content. Publishers seek control over how their work is used, and several-including the Times-are now suing AI companies over whether training models on copyrighted material violates the law. There's a strong case that such training is fair use."

"Whatever the outcome of those lawsuits, blocking nonprofit archivists is the wrong response. Organizations like the Internet Archive are not building commercial AI systems. They are preserving a record of our history."

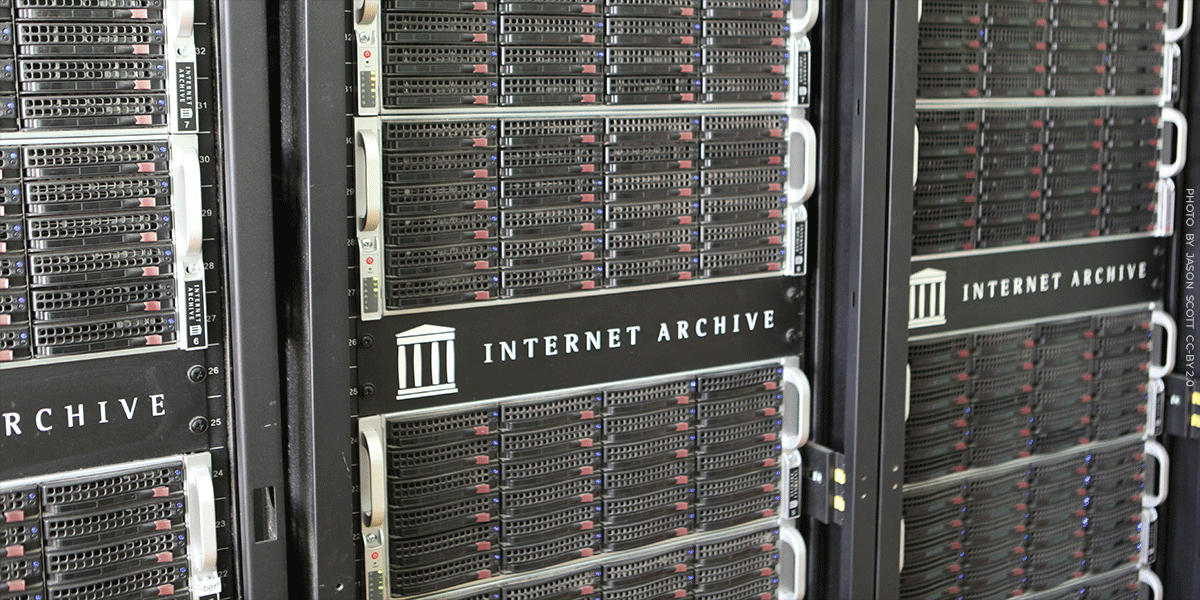

The Internet Archive, a nonprofit digital library that has preserved more than one trillion web pages since the mid-1990s, is being blocked by major publishers such as The New York Times and The Guardian. These publishers use technical measures that go beyond standard robots.txt rules to keep the Archive's crawlers away from their content. Publishers cite concerns about AI companies scraping news for training data, but blocking nonprofit archivists damages historical preservation. Archived pages are often the only reliable record of how stories were originally published, because articles frequently get edited, changed, or removed. This preservation function differs fundamentally from commercial AI training, yet publishers suing AI companies are at the same time cutting off access for nonprofit preservation efforts.

Read at Electronic Frontier Foundation

