"While traditional attention mechanisms have proven remarkably effective, they often come with steep computational and memory costs. MLA reimagines this core operation by introducing a latent representation space that dramatically reduces overhead while preserving the model's ability to capture rich contextual relationships."

"In autoregressive generation (producing one token at a time), we cannot recompute attention over all previous tokens from scratch at each step - that would be expensive computation per token generated. Instead, we cache the key and value matrices to optimize the process."
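The caching idea in the quote above can be sketched in a few lines of plain Python. This is a minimal single-head illustration, not the tutorial's code: the helper names (`matvec`, `attend`, `step_without_cache`), the tiny dimensions, and the random weights are all assumptions made for the example. It checks that appending one new key and value per step gives the same attention output as re-projecting the whole prefix.

```python
import math
import random


def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def attend(q, keys, values):
    """Single-head scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(q))
    scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in keys]
    peak = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(dim)]


def rand_matrix(n):
    return [[random.gauss(0, 1) for _ in range(n)] for _ in range(n)]


random.seed(0)
d_model, n_steps = 4, 5  # hypothetical toy sizes
W_q, W_k, W_v = rand_matrix(d_model), rand_matrix(d_model), rand_matrix(d_model)
tokens = [[random.gauss(0, 1) for _ in range(d_model)] for _ in range(n_steps)]


def step_without_cache(t):
    """Re-project every token in the prefix to produce the output at step t."""
    prefix = tokens[: t + 1]
    keys = [matvec(W_k, x) for x in prefix]
    values = [matvec(W_v, x) for x in prefix]
    return attend(matvec(W_q, prefix[-1]), keys, values)


# With a cache: project only the newest token, then append to the cache.
k_cache, v_cache, cached_outputs = [], [], []
for x in tokens:
    k_cache.append(matvec(W_k, x))
    v_cache.append(matvec(W_v, x))
    cached_outputs.append(attend(matvec(W_q, x), k_cache, v_cache))

uncached_outputs = [step_without_cache(t) for t in range(n_steps)]
```

Over these five steps the uncached path computes 1 + 2 + 3 + 4 + 5 = 15 key projections, while the cached path computes 5. The price is that `k_cache` and `v_cache` grow by one entry per generated token, which is the memory cost MLA targets.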

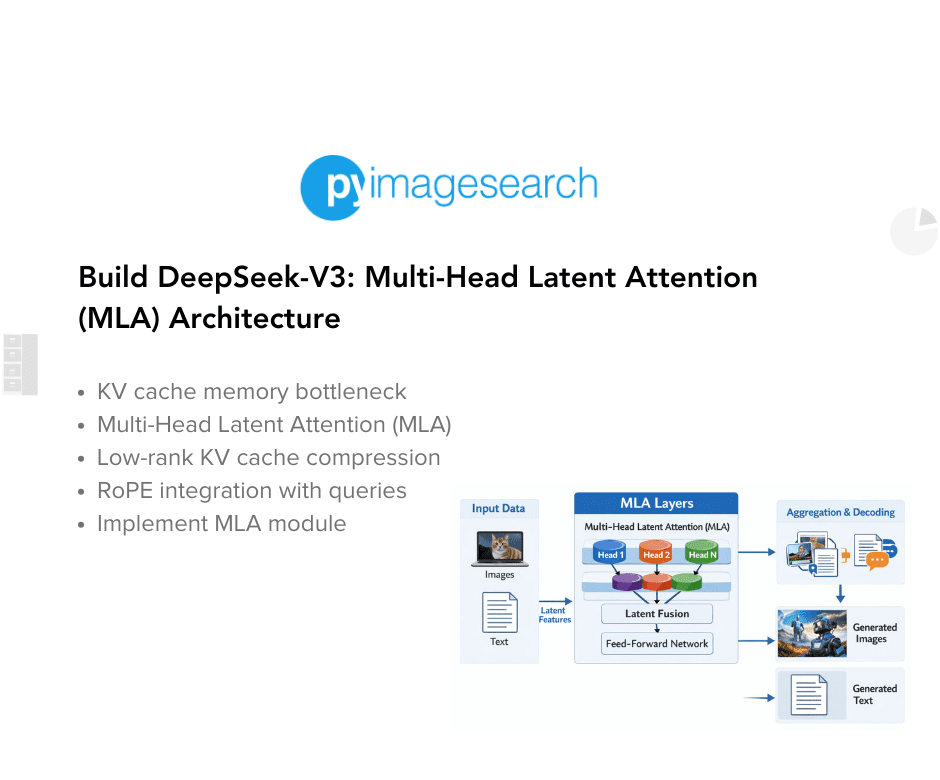

This tutorial continues a six-part series on building DeepSeek-V3 from scratch and focuses on Multi-Head Latent Attention (MLA), one of the architecture's key innovations. Building on the earlier lesson on Rotary Positional Embeddings, it addresses the memory bottleneck in Transformer inference: during autoregressive generation, standard multi-head attention caches keys and values for every previous token, and that cache grows with sequence length. MLA introduces a latent representation space that reduces this computational and memory overhead while preserving the model's ability to capture rich contextual relationships. The lesson pairs theoretical explanation with a hands-on implementation, as a step toward reconstructing the complete DeepSeek-V3 architecture.
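To make the memory saving concrete, here is a back-of-the-envelope sketch comparing what each approach stores per token in one layer. It assumes a standard cache holds one key and one value vector per head, and a latent cache holds a single compressed vector from which keys and values are re-derived. The function names and sizes are hypothetical, chosen for illustration rather than taken from DeepSeek-V3's configuration, and the sketch ignores any extra positional component a full implementation may also cache.

```python
def mha_cache_elems_per_token(n_heads, head_dim):
    """Standard multi-head attention: one key and one value vector per head."""
    return 2 * n_heads * head_dim


def latent_cache_elems_per_token(latent_dim):
    """Latent attention (simplified): one compressed vector per token."""
    return latent_dim


# Hypothetical sizes for illustration only.
n_heads, head_dim, latent_dim = 8, 64, 128

mha_elems = mha_cache_elems_per_token(n_heads, head_dim)   # 2 * 8 * 64 = 1024
latent_elems = latent_cache_elems_per_token(latent_dim)    # 128
reduction = mha_elems / latent_elems                        # 8.0

print(f"standard cache: {mha_elems} elements per token per layer")
print(f"latent cache:   {latent_elems} elements per token per layer")
print(f"reduction:      {reduction:.1f}x")
```

The ratio depends entirely on the chosen latent dimension: a smaller latent vector saves more memory but leaves less room to encode what the keys and values need.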

#deepseek-v3 #multi-head-latent-attention #transformer-architecture #attention-mechanisms #model-optimization

Read at PyImageSearch
