#natural-language-processing


#machine-learning #ai #artificial-intelligence #multi-token-prediction #large-language-models #user-experience

from Techzine Global

4 days ago

The only thing constant in technology is change, except for unrealistic hopefulness

Edsger Dijkstra argued that the inherent ambiguities and slow evolution of natural languages were conclusive reasons to abandon any real idea of programming in human languages. He was right.

Software development

fromThe JetBrains Blog

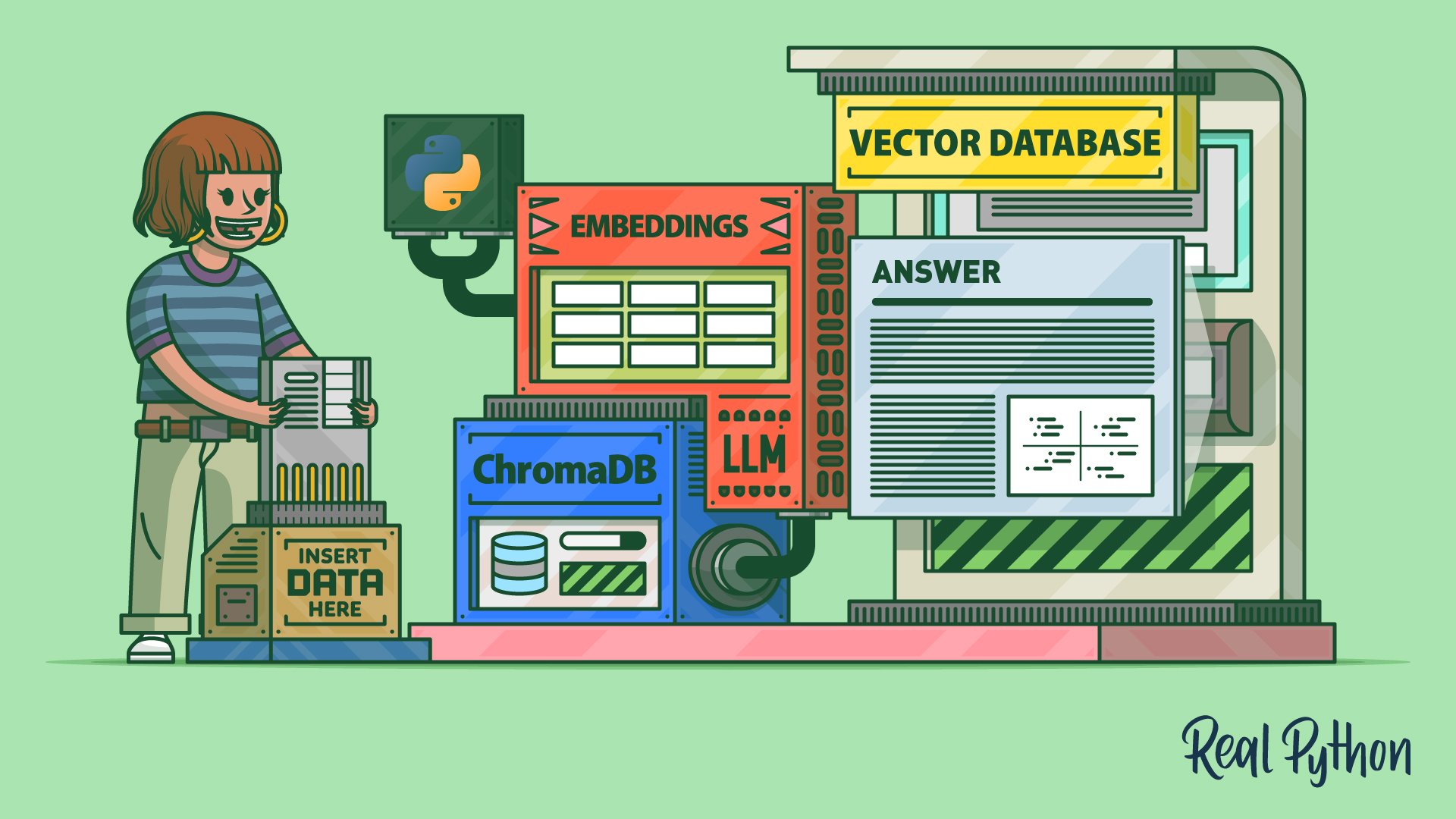

6 days agoUsing Bag-of-Words With PyCharm | The PyCharm Blog

The bag-of-words model is a text representation technique that converts unstructured text into numerical vectors by tracking which words appear across a corpus. Rather than preserving grammar or word order, it simply represents each document as a 'bag' of its words, recording how often each one appears.
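The description above can be made concrete with a minimal sketch. It is not the linked article's code: the `tokenize` and `bag_of_words` helpers and the two sample sentences are hypothetical, and the tokenizer is a deliberately naive assumption (lowercase, split on whitespace, strip basic punctuation). It uses only the Python standard library.

```python
from collections import Counter

PUNCTUATION = ".,!?;:'\""


def tokenize(text):
    """Naive tokenizer (an assumption): lowercase, split on whitespace, strip punctuation."""
    words = (raw.strip(PUNCTUATION).lower() for raw in text.split())
    return [word for word in words if word]


def bag_of_words(documents):
    """Return a sorted vocabulary and one word-count vector per document."""
    tokenized = [tokenize(doc) for doc in documents]
    # The vocabulary is every distinct word seen anywhere in the corpus.
    vocabulary = sorted({word for doc in tokenized for word in doc})
    vectors = []
    for doc in tokenized:
        counts = Counter(doc)
        # Word order is discarded; only per-word frequencies are kept.
        vectors.append([counts[word] for word in vocabulary])
    return vocabulary, vectors


# Hypothetical two-document corpus for illustration.
docs = ["The cat sat on the mat.", "The dog chased the cat."]
vocabulary, vectors = bag_of_words(docs)
print(vocabulary)
for vector in vectors:
    print(vector)
```

Each vector has one slot per vocabulary word, so documents of different lengths become fixed-size numeric rows that can be compared or fed to a classifier.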

Python

Marketing tech

from Social Media Explorer

1 month ago

AI Voice Agents in 2026 - How Businesses Are Replacing IVR With Conversational AI That Actually Works - Social Media Explorer

AI voice agents significantly improve customer service efficiency by resolving issues directly rather than routing through traditional IVR systems.

from TechCrunch

1 month ago

Google is using old news reports and AI to predict flash floods | TechCrunch

While humans have assembled a lot of weather data, flash floods are too short-lived and localized to be measured comprehensively, the way the temperature or even river flows are monitored over time. That data gap means that deep learning models, which are increasingly capable of forecasting the weather, aren't able to predict flash floods.

Science

Artificial intelligence

from Engadget

1 month ago

Google brings Gemini-powered content creation tools to Docs, Sheets, Slides and Drive

Google is rolling out Gemini AI features across its productivity suite, enabling users to generate first drafts in Docs, build spreadsheets in Sheets, design presentations in Slides, and query files in Drive using natural language prompts.

Marketing tech

from Neil Patel

2 months ago

Voice Search Ads Are Changing Google's Search Term Report

Voice search queries have grown from 2.8 words to 9-10 words in length, shifting Google Ads from keyword matching to semantic understanding and requiring agencies to fundamentally rethink intent tracking and budget management.

Intellectual property law

from Patently-O

2 months ago

The Recentive Ratchet: RPI's NLP Patent Falls to the New-Environment Rule

The Federal Circuit affirmed that a patent claiming natural language processing using case-based reasoning on a metadata database is ineligible under 35 U.S.C. § 101, establishing that applying established AI techniques to new fields does not overcome patent ineligibility.

from Medium

5 months ago

From Human Simulations to Work Companions: The Future of Human-AI Collaborative Intelligence

In the decades since natural language processing (NLP) first emerged as a research field, artificial intelligence has evolved from a linguistic curiosity into a catalyst reshaping how humans think, work, and create. Few people are as qualified to trace that journey, or to imagine what comes next, as Rada Mihalcea, Professor of Computer Science and Engineering and Director of the Michigan AI Lab at the University of Michigan.

Artificial intelligence

Artificial intelligence

fromeLearning Industry

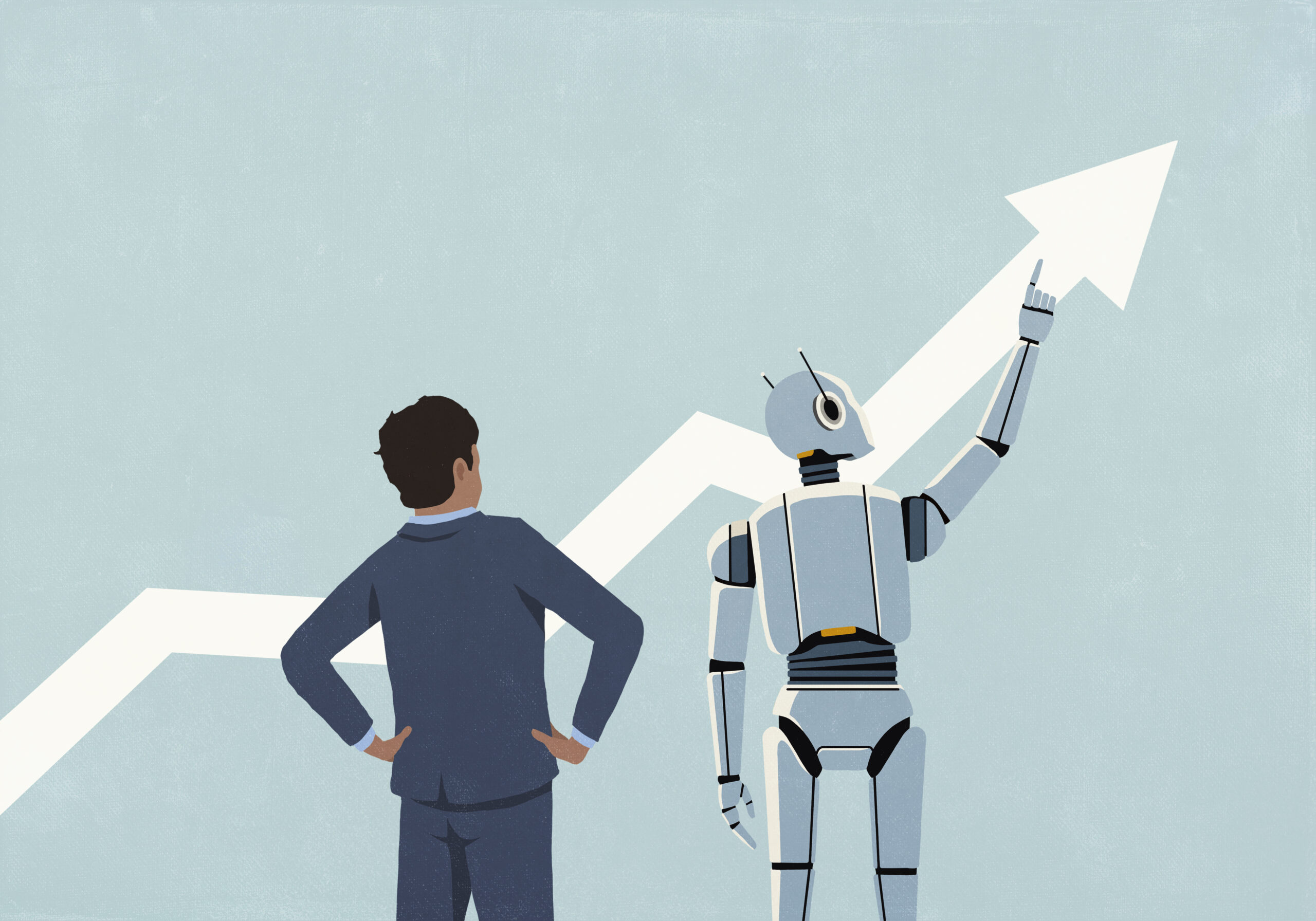

7 months agoAI And Machine Learning In ROI Measurement: Unlocking Advanced Analytics

AI enables precise, predictive, and actionable ROI measurement by processing vast learning data in real time, uncovering subtle correlations between learning behaviors and business outcomes.

Online learning

from eLearning Industry

7 months ago

Unlocking Emotions: How Sentiment Analysis Is Revolutionizing Personalized Learning

Integrating sentiment analysis into adaptive learning enables emotionally aware personalization that improves engagement, empathy training, and learning outcomes across industries like publishing and healthcare.

from ClickUp

10 months ago

13 Best AI Intranet Search Tools for 2025 | ClickUp

Traditional intranet search engines struggle to keep up with the growing complexity of internal knowledge, with information scattered across tools like Google Drive, Notion, SharePoint, Confluence, Slack, and your project management platform.

Artificial intelligence

from Hackernoon

1 year ago

Empirical Results: GPT-2 Analysis of Transformer Memorization & Loss | HackerNoon

These experiments with GPT-2 medium on OpenWebText validate the radius hypothesis from our theoretical framework, measuring activation distances in the last layer for next-token prediction.

Roam Research

from Amazon Web Services

10 months ago

How VideoAmp uses Amazon Bedrock to power their media analytics interface | Amazon Web Services

The collaboration between VideoAmp and AWS GenAIIC resulted in a prototype chatbot that utilizes natural language processing to analyze media analytics data efficiently.

Artificial intelligence

Growth hacking

from Geeky Gadgets

10 months ago

Build Apps in Minutes with Claude Code Using Natural Language (No Code)

App development becomes effortless with Claude Code, enabling creation through conversation-like natural language commands.

Accessible design promotes usability for novices and experts, bridging the gap in app development.

It includes essential features like full content ownership and version control for enhanced project management.

Artificial intelligence

from Hackernoon

56 years ago

Multi-Token Prediction: Architecture for Memory-Efficient LLM Training | HackerNoon

Multi-token prediction improves language modeling by letting the model predict multiple tokens at once.

The performance improvement grows with model size.

Artificial intelligence

from Hackernoon

1 year ago

Approaches to Counterspeech Detection and Generation Using NLP Techniques | HackerNoon

Counterspeech detection and generation increasingly rely on automated classifiers and transformer-based language models, improving responses to online hate speech.
