"TurboQuant compresses the cache to just 3 bits per value, down from the standard 16, reducing its memory footprint by at least six times without any measurable loss in accuracy."

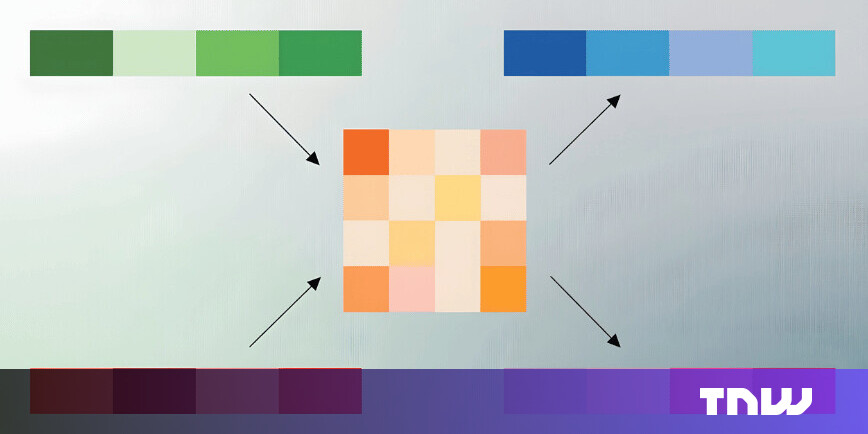

"Traditional quantization methods reduce the size of data vectors but must store additional constants, normalization values that the system needs in order to decompress the data accurately."

"TurboQuant's core innovation is eliminating the overhead that makes most compression techniques less effective than their headline numbers suggest."
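To see why stored constants erode a headline compression figure, here is a minimal arithmetic sketch. It does not model TurboQuant itself. It assumes a hypothetical conventional scheme with 4-bit values, blocks of 32 values, and one 16-bit scale plus one 16-bit zero-point per block; all of these numbers and function names are illustrative assumptions, not figures from the article.

```python
def effective_bits_per_value(bits: int, block_size: int, constant_bits: int) -> float:
    """Average storage cost per value when every block of `block_size` values
    also carries `constant_bits` of side information (e.g. scale, zero-point)."""
    return bits + constant_bits / block_size


def compression_ratio(baseline_bits: float, compressed_bits: float) -> float:
    """How many times smaller the compressed representation is."""
    return baseline_bits / compressed_bits


# Hypothetical conventional scheme (assumed values, not from the article):
# 4-bit codes, 32 values per block, 16-bit scale + 16-bit zero-point per block.
BASELINE_BITS = 16
CODE_BITS = 4
BLOCK_SIZE = 32
CONSTANT_BITS = 16 + 16

headline = compression_ratio(BASELINE_BITS, CODE_BITS)
effective_bits = effective_bits_per_value(CODE_BITS, BLOCK_SIZE, CONSTANT_BITS)
actual = compression_ratio(BASELINE_BITS, effective_bits)

print(f"headline ratio:  {headline:.2f}x")            # 4.00x
print(f"effective bits:  {effective_bits:.2f}")       # 5.00
print(f"actual ratio:    {actual:.2f}x")              # 3.20x
```

Under these assumptions the per-block constants add one full bit per value, so a nominal 4x reduction becomes 3.2x in practice. Smaller blocks make the gap wider, because the same constants are spread over fewer values.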

Google has introduced TurboQuant, a compression algorithm that shrinks the key-value (KV) cache of large language models from 16 bits to 3 bits per value. The KV cache is a major bottleneck in running these models, so compressing it allows more efficient use of GPU memory. The algorithm reportedly cuts the cache's memory requirements by at least six times while maintaining accuracy. TurboQuant builds on previous research and uses a two-stage process that avoids the storage overhead of traditional quantization methods.

Read at TNW | Corporates-Innovation
