"It won't be in search, it won't be in hashtags, it won't be in recommendations. Beginning in the 2010s, the company had come under scrutiny for its perceived role in a growing teen mental health crisis. Then, in 2017, a 14-year-old girl in the United Kingdom named Molly Russell committed suicide after being fed self-harm content on Instagram."

"Russell's death spurred an international reckoning on teenage social media usage. But nearly a year after Mosseri assured the public that Instagram was taking action, executives for Meta, Instagram's parent company, appeared to admit the algorithm was still pushing self-harm and eating disorder content."

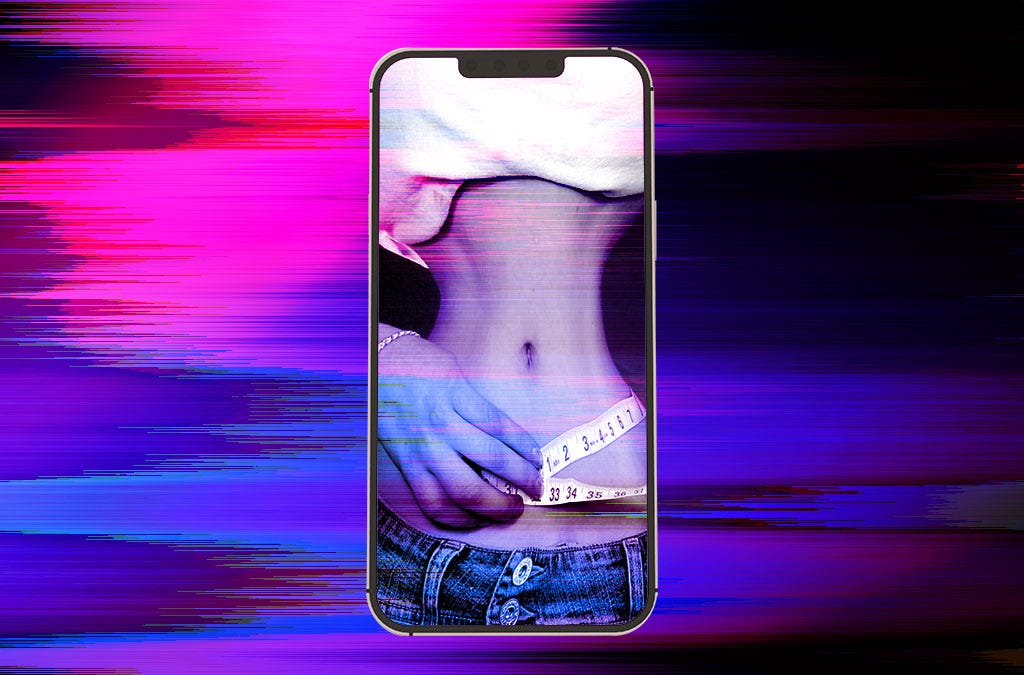

In February 2019, Instagram head Adam Mosseri pledged to remove graphic self-harm content from search, hashtags, and recommendations. The pledge followed years of scrutiny over Instagram's perceived role in a growing teen mental health crisis, intensified by the 2017 death of 14-year-old Molly Russell in the United Kingdom, who died by suicide after being fed self-harm content on the platform. Russell's death prompted an international reckoning on teenage social media use. However, nearly a year after Mosseri's public assurance, executives at Meta, Instagram's parent company, appeared to admit that the algorithm was still pushing self-harm and eating disorder content, pointing to a gap between the company's stated policies and the platform's actual behavior.

#instagram-content-moderation #teen-mental-health #self-harm-content #social-media-algorithm #meta-accountability

Read at Thefp

